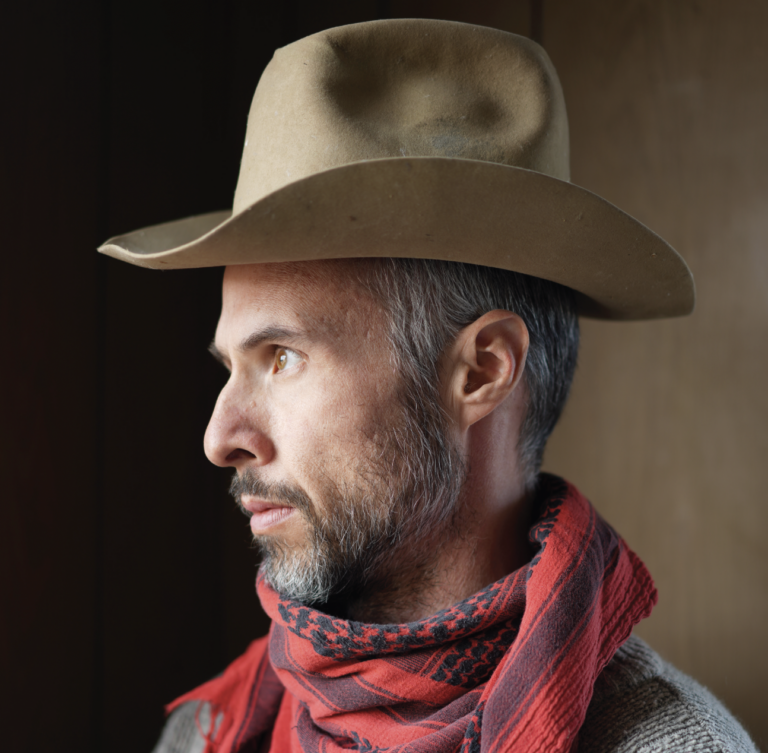

HIS OFFICE LOOKS EXACTLY LIKE YOU’D EXPECT. Piles of important-looking paper everywhere, books along the wall with titles I can’t understand, and a chalkboard filled with what might as well be Sanskrit.

Chris Moore is a professor at the Santa Fe Institute who works, as he says, on “problems at the interface of physics, computer science, and mathematics, such as phase transitions in statistical inference.” That means he’s studying a lot of things: social networks, big data, physics-powered inference, the diversity of language. Well, you get the idea. He’s pretty smart.

Today I’m at the Santa Fe Institute on Hyde Park Road, interrupting his work to talk about artificial intelligence. Not how IBM’s Watson can make our businesses better, but how AI is affecting our daily lives in ways we would never expect.

You are part of a Working Group on Algorithmic Justice. What is that?

I work on transparency and accountability in algorithmic decision-making for what are called high-stakes decisions. These include housing, employment, and lending. In criminal justice, they include bail risk assessment for pretrial defendants and even sentencing and parole decisions.

So banks’ lending, landlord approvals, and courts use algorithms to make decisions?

Yes. I’m trying to demystify machine learning and statistics so that we can all make critical decisions about whether and how algorithms should be deployed.

These algorithms decide whether you will be a trustworthy tenant or borrower. Should you be given pretrial bail? It’s all a point system based on past behavior. It produces a score, which is given to a judge, who uses it to make a decision.
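To make the idea of a “point system” concrete, here is a minimal Python sketch. Every factor name and point value in it is hypothetical, invented for illustration; it is not how the PSA, Compas, or any real tool scores people. It simply shows the general shape: facts about someone’s past are turned into points, the points are added up, and the total is mapped onto a simple scale that a judge or landlord sees.

```python
from dataclasses import dataclass

# HYPOTHETICAL factors for illustration only; not taken from any real tool.
@dataclass
class History:
    prior_failures_to_appear: int
    prior_convictions: int
    pending_charge: bool

def risk_score(h: History) -> int:
    """Add up points for each hypothetical factor; each factor is capped."""
    points = 0
    points += min(h.prior_failures_to_appear, 2) * 2  # at most 4 points
    points += min(h.prior_convictions, 3)             # at most 3 points
    points += 1 if h.pending_charge else 0            # at most 1 point
    return points  # raw total between 0 and 8

def scaled_score(h: History, scale: int = 10) -> int:
    """Map the raw 0..8 total onto a 1..scale score shown to the decision-maker."""
    raw = risk_score(h)
    return 1 + round(raw * (scale - 1) / 8)

# Example: one missed court date, two prior convictions, one pending charge.
person = History(prior_failures_to_appear=1, prior_convictions=2, pending_charge=True)
print(risk_score(person), scaled_score(person))  # 5 7
```

Because every rule here is written out, anyone can check why a person got a 7. That is the kind of transparency at stake when a scoring system is open rather than a black box.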

Are these widespread?

In criminal justice, 40 states use them.

Now, when I first started studying this, I thought, “This is terrible, algorithms are putting us in jail!” But it turns out that, as often as not, they work as reminders to judges that a defendant may not be as bad a risk as the judge might think.

But here’s the problem. In pretrial risk assessment, there are two main algorithms you can use. One is the PSA (Public Safety Assessment), a transparent algorithm from a nonprofit. The other is called Compas, which is private and basically a black box. We don’t know how Compas makes its decisions, and that’s bad. In our system, you should be able to confront your accusers. But you can’t cross-examine an algorithm.

Landlords use these too, so you might apply for an apartment, and the landlord comes back and says the algorithm rated you only a 6 out of 10. And you have no recourse.

But even the best algorithms simply use statistics. They use data from the past to predict the future. So these are all averages, but don’t we want to be treated as individuals?

Of course, judges also have their own biases, so it’s not like algorithms are bad and humans are good. But the algorithms need to be fully transparent, so we can have an informed discussion about how these decisions should be made. When constitutional rights are at stake, there’s no excuse for using something that is opaque.

Why would someone use Compas?

When it’s hidden, it’s easier for companies to hype it, to make it seem like magic. It has the illusion of being sophisticated and objective.

WANT TO READ MORE? SUBSCRIBE TO SANTA FE MAGAZINE HERE!

Photo SFM